
Framed as a classification problem, this gender detection and age prediction project will put both your machine learning and computer vision skills to the test. The goal is to build a system that takes an image of a person and predicts their age and gender.

For this project, you can implement convolutional neural networks in Python with the OpenCV package, and you can use the Adience dataset. Keep in mind that factors such as makeup, lighting, and facial expressions make this problem challenging and can throw your model off.

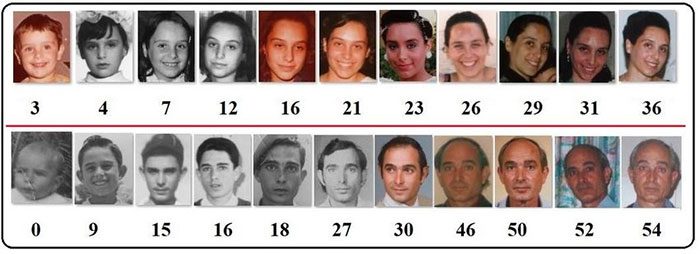

Age detection is the process of automatically discerning the age of a person solely from a photo of their face.

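Typically, you’ll see age detection implemented as a two-stage process:

- Stage #1: Detect faces in the input image or video stream.
- Stage #2: Extract the face region of interest (ROI) and apply the age detector to predict the person’s age.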

For Stage #1, any face detector capable of producing bounding boxes for faces in an image can be used, including but not limited to Haar cascades, HOG + Linear SVM, Single Shot Detectors (SSDs), etc.

Exactly which face detector you use depends on your project, because face detectors differ in how they trade off speed and accuracy.

When choosing a face detector for your application, take the time to consider your project requirements — is speed or accuracy more important for your use case? I also recommend running a few experiments with each of the face detectors so you can let the empirical results guide your decisions.
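One way to run those experiments is with a small timing harness. The sketch below runs every detector over the same frames and reports the mean latency per frame and the total number of faces returned. The detector callables are hypothetical wrappers that you would write around Haar, HOG, or SSD detectors. The face count is only a rough proxy; measuring accuracy properly requires comparing against hand-labeled boxes.

```python
import time
from statistics import mean
from typing import Any, Callable, Dict, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (start_x, start_y, end_x, end_y)


def benchmark_detectors(
    detectors: Dict[str, Callable[[Any], List[Box]]],
    frames: Sequence[Any],
) -> Dict[str, dict]:
    """Run every detector on the same frames; report mean latency and face count."""
    if not frames:
        raise ValueError("need at least one frame to benchmark")
    results: Dict[str, dict] = {}
    for name, detect in detectors.items():
        timings = []
        faces_found = 0
        for frame in frames:
            start = time.perf_counter()
            boxes = detect(frame)  # each detector returns a list of boxes
            timings.append(time.perf_counter() - start)
            faces_found += len(boxes)
        results[name] = {"mean_seconds": mean(timings), "faces_found": faces_found}
    return results
```

For example, `benchmark_detectors({"haar": haar_detect, "dnn": dnn_detect}, frames)` would let you compare two such wrappers side by side on your own footage.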

Once your face detector has produced the bounding box coordinates of the face in the image/video stream, you can move on to Stage #2 — identifying the age of the person.

Given the bounding box (x, y)-coordinates of the face, you first extract the face ROI, ignoring the rest of the image/frame. Doing so allows the age detector to focus solely on the person’s face and not any other irrelevant “noise” in the image.

The face ROI is then passed through the model, yielding the actual age prediction.
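The two stages can be sketched as a small pipeline. This is a minimal illustration, not the tutorial's code. `detect_faces` and `classify_age` are hypothetical stand-ins for a real face detector and age model. The image is any row-indexable 2-D structure. Boxes are clamped to the image bounds so that a detection near the border still yields a valid crop.

```python
from typing import Any, Callable, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (start_x, start_y, end_x, end_y) in pixels


def extract_face_roi(image: Sequence[Sequence[Any]], box: Box) -> List[Sequence[Any]]:
    """Crop the face ROI, clamping the box so it never leaves the image."""
    height = len(image)
    width = len(image[0]) if height else 0
    start_x, start_y, end_x, end_y = box
    start_x, start_y = max(0, start_x), max(0, start_y)
    end_x, end_y = min(width, end_x), min(height, end_y)
    return [row[start_x:end_x] for row in image[start_y:end_y]]


def predict_ages(
    image: Sequence[Sequence[Any]],
    detect_faces: Callable[[Any], List[Box]],
    classify_age: Callable[[List[Sequence[Any]]], str],
) -> List[Tuple[Box, str]]:
    """Stage 1: find faces. Stage 2: crop each face ROI and classify its age."""
    predictions = []
    for box in detect_faces(image):
        face = extract_face_roi(image, box)
        # Skip boxes that fall completely outside the image after clamping.
        if not face or not len(face[0]):
            continue
        predictions.append((box, classify_age(face)))
    return predictions
```

With a NumPy image, you would normally crop in one step with `image[start_y:end_y, start_x:end_x]`. The list-based version above simply keeps the sketch dependency-free.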

There are a number of age detector algorithms, but the most popular ones are deep learning-based age detectors — we’ll be using such a deep learning-based age detector in this tutorial.

The deep learning age detector model we are using here today was implemented and trained by Levi and Hassner in their 2015 publication, *Age and Gender Classification Using Convolutional Neural Networks*.

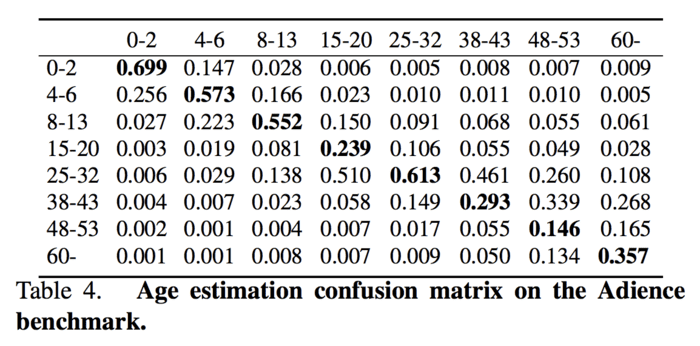

In the paper, the authors propose a simple AlexNet-like architecture that learns a total of eight age brackets:

- 0-2
- 4-6
- 8-12
- 15-20
- 25-32
- 38-43
- 48-53
- 60-100

You’ll note that these age brackets are noncontiguous. This is done on purpose: the Adience dataset, used to train the model, defines the age ranges this way (we’ll learn why this is done in the next section).

We’ll be using a pre-trained age detector model in this post, but if you are interested in learning how to train it from scratch, be sure to read the guide where I show you how to do exactly that.

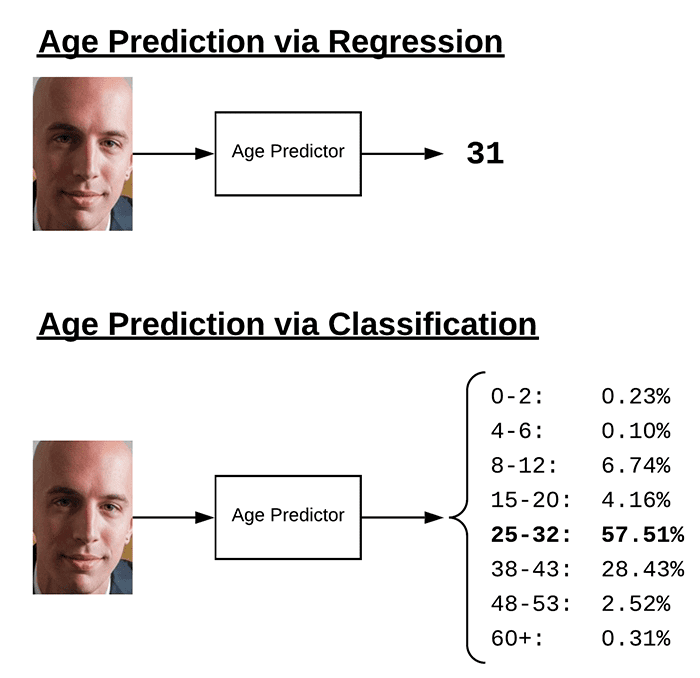

You’ll notice from the previous section that we have discretized ages into “buckets,” thereby treating age prediction as a classification problem. Why not frame it as a regression problem instead?

Technically, there’s no reason why you can’t treat age prediction as a regression task. There are even some models that do just that.

The problem is that age prediction is inherently subjective and based solely on appearance.

A person in their mid-50s who has never smoked in their life, always wore sunscreen when going outside, and took care of their skin daily will likely look younger than someone in their late-30s who smokes a carton a day, works manual labor without sun protection, and doesn’t have a proper skin care regimen.

And let’s not forget the most important driving factor in aging, genetics — some people simply age better than others.

For example, consider Matthew Perry (who played Chandler Bing on the TV sitcom *Friends*) and Jennifer Aniston (who played Rachel Green alongside Perry):

Could you guess that, at the time of writing, Matthew Perry (50) was actually younger than Jennifer Aniston (51)?

Unless you have prior knowledge about these actors, I doubt it.

But, on the other hand, could you guess that both actors fall in the 48-53 age bracket?

I’m willing to bet you probably could.

While humans are inherently bad at predicting a single age value, we are actually quite good at predicting age brackets.

This is a loaded example, of course.

Between favorable genetics and, possibly, cosmetic help, Jennifer Aniston seems to never age.

But that goes to show my point — people purposely try to hide their age.

And if a human struggles to accurately predict the age of a person, then surely a machine will struggle as well.

Once you start treating age prediction as a regression problem, it becomes significantly harder for a model to accurately predict a single value representing that person’s age.

However, if you treat it as a classification problem by defining age brackets for the model, the age predictor becomes easier to train and often yields substantially higher accuracy than regression-based prediction alone.

Simply put: Treating age prediction as classification “relaxes” the problem a bit, making it easier to solve — typically, we don’t need the exact age of a person; a rough estimate is sufficient.
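To make the “relaxation” concrete, the sketch below collapses an exact age into one of the eight bracket indices, turning a regression target into a class label. The brackets come from the list above. Because they are noncontiguous, some ages (such as 3 or 35) fall into a gap. Assigning those ages to the bracket with the closest edge is purely an assumption made for this illustration; ties go to the younger bracket.

```python
from typing import List, Tuple

# The eight (inclusive) age brackets used by the model.
AGE_BRACKETS: List[Tuple[int, int]] = [
    (0, 2), (4, 6), (8, 12), (15, 20), (25, 32), (38, 43), (48, 53), (60, 100),
]


def age_to_bracket(age: float) -> int:
    """Return the index of the bracket containing `age`, else the nearest one."""
    best_index, best_distance = 0, float("inf")
    for index, (low, high) in enumerate(AGE_BRACKETS):
        if low <= age <= high:
            return index
        # Distance from the age to the nearest edge of this bracket.
        distance = low - age if age < low else age - high
        if distance < best_distance:  # strict '<' keeps the younger bracket on ties
            best_index, best_distance = index, distance
    return best_index
```

For instance, `age_to_bracket(28)` returns 4 (the 25-32 bracket), while `age_to_bracket(36)` falls in a gap and is assigned to index 5 (38-43).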

One of the biggest issues with the age prediction model trained by Levi and Hassner is that it’s heavily biased toward the age group 25-32, as shown by the confusion matrix in their paper.

That unfortunately means that our model may predict the 25-32 age group when in fact the actual age belongs to a different age bracket — I noticed this a handful of times when gathering results for this tutorial as well as in my own applications of age prediction.

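You can combat this bias by rebalancing training. For example, you can gather more training data for the under-represented age groups, or you can weight each class during training to compensate for the imbalance.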
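Class weighting can be sketched with the common “balanced” heuristic, `n_samples / (n_classes * count_for_class)`. This is the same formula scikit-learn uses for `class_weight="balanced"`. Rare brackets get weights above 1 and over-represented brackets (such as 25-32) get weights below 1. Giving a class with no samples a weight of 0.0 is an assumption of this sketch.

```python
from collections import Counter
from typing import Dict, Sequence


def balanced_class_weights(labels: Sequence[int], num_classes: int) -> Dict[int, float]:
    """Inverse-frequency weights: n_samples / (n_classes * count_for_class)."""
    counts = Counter(labels)
    total = len(labels)
    weights: Dict[int, float] = {}
    for cls in range(num_classes):
        count = counts.get(cls, 0)
        # A class that never appears gets 0.0, since there is nothing to reweight.
        weights[cls] = total / (num_classes * count) if count else 0.0
    return weights
```

The resulting dictionary can be passed to a training loop that supports per-class weights, for example the `class_weight` argument of Keras `Model.fit`.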

Secondly, age prediction results can typically be improved by using face alignment.

Face alignment identifies the geometric structure of faces and then attempts to obtain a canonical alignment of the face based on translation, scale, and rotation.
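A common way to implement this is to use the two eye centers as anchors. The sketch below assumes those centers come from some facial landmark detector, which is not part of this tutorial. It computes a 2x3 similarity transform that rotates the eyes onto a horizontal line, scales them to a desired separation, and translates their midpoint to a desired position. The values `desired_eye_distance` and `desired_center` are illustrative parameters, not values from the original post.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def eye_alignment_matrix(
    left_eye: Point, right_eye: Point,
    desired_eye_distance: float, desired_center: Point,
) -> List[List[float]]:
    """Similarity transform (rotation + scale + translation) that levels the eyes."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    eye_distance = math.hypot(dx, dy)
    if eye_distance == 0:
        raise ValueError("eye centers must be distinct")
    scale = desired_eye_distance / eye_distance
    angle = math.atan2(dy, dx)  # current tilt of the eye line
    a = scale * math.cos(angle)
    b = scale * math.sin(angle)
    mid_x = (left_eye[0] + right_eye[0]) / 2
    mid_y = (left_eye[1] + right_eye[1]) / 2
    tx, ty = desired_center
    # Rotate by -angle and scale about the eye midpoint, then move it to the target.
    return [
        [a, b, tx - (a * mid_x + b * mid_y)],
        [-b, a, ty - (-b * mid_x + a * mid_y)],
    ]


def apply_affine(matrix: List[List[float]], point: Point) -> Point:
    """Map a single (x, y) point through a 2x3 affine matrix."""
    x, y = point
    return (
        matrix[0][0] * x + matrix[0][1] * y + matrix[0][2],
        matrix[1][0] * x + matrix[1][1] * y + matrix[1][2],
    )
```

The matrix has the 2x3 layout that OpenCV expects, so the whole face image could then be warped with something like `cv2.warpAffine(image, np.array(M), (width, height))`.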

In many cases (but not always), face alignment can improve the results of face applications, including face recognition and age prediction.

For simplicity, we did not apply face alignment in this tutorial, but you can learn more about face alignment and then apply it to your own age prediction applications.

I have chosen to purposely not cover gender prediction in this tutorial.

While using computer vision and deep learning to identify the gender of a person may seem like an interesting classification problem, it’s actually one fraught with moral implications.

Just because someone visually looks, dresses, or appears a certain way does not imply they identify with that (or any) gender.

Software that attempts to distill gender into binary classification only further chains us to antiquated notions of what gender is. Therefore, I would encourage you to not utilize gender recognition in your own applications if at all possible.

If you must perform gender recognition, make sure you are holding yourself accountable, and ensure you are not building applications that attempt to conform others to gender stereotypes (e.g., customizing user experiences based on perceived gender).

With the rise of social platforms and social media, there is also a growing number of applications that need automatic age and gender classification. Age and gender are two key facial attributes that play a very important role in social interactions.

So, in this deep learning project, we will build real-time gender and age detection using pre-trained models that classify a person’s gender and age. The model predicts gender as ‘Male’ or ‘Female’. It predicts age as one of the following ranges: (0-2), (4-6), (8-12), (15-20), (25-32), (38-43), (48-53), (60-100). The final softmax output layer of the age model therefore has 8 nodes.
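Turning the network’s output into a label is a softmax followed by an argmax. The sketch below shows both steps on plain Python lists. The order of `GENDER_LABELS` is an assumption, since it must match whatever order the pre-trained gender model was trained with. In a real application, the scores would come from the model’s forward pass.

```python
import math
from typing import List, Sequence, Tuple

AGE_BUCKETS = ["(0-2)", "(4-6)", "(8-12)", "(15-20)",
               "(25-32)", "(38-43)", "(48-53)", "(60-100)"]
GENDER_LABELS = ["Male", "Female"]  # assumed output order of the gender model


def softmax(logits: Sequence[float]) -> List[float]:
    """Turn raw scores into probabilities (subtracting the max for stability)."""
    largest = max(logits)
    exps = [math.exp(x - largest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode_prediction(probabilities: Sequence[float],
                      labels: Sequence[str]) -> Tuple[str, float]:
    """Pick the most probable class and return (label, probability)."""
    if len(probabilities) != len(labels):
        raise ValueError("expected one probability per label")
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    return labels[best], probabilities[best]
```

Reporting the winning probability alongside the label is useful because a low maximum (for example, 0.3 spread across several brackets) signals an uncertain prediction.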

It is very difficult to predict a person’s exact age because of factors such as makeup, lighting, and facial expressions. That is why we treat this as a classification problem instead of a regression problem.

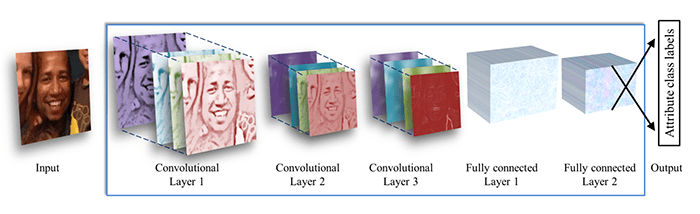

The model is a convolutional neural network (CNN), a type of deep neural network (DNN) that is very popular for classification problems and widely used for image recognition and processing. A CNN consists of an input layer, multiple hidden layers, and an output layer.

The CNN used in this project has 3 convolutional layers. The first has 96 filters with a 7x7 kernel, the second has 256 filters with a 5x5 kernel, and the third has 384 filters with a 3x3 kernel.

It also has 2 fully connected layers with 512 nodes each, followed by a final softmax output layer.
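A quick way to get a feel for this architecture is to count its learnable parameters. The sketch below assumes a 3-channel input and ordinary (non-grouped) convolutions with one bias per filter. Pooling and normalization layers are ignored because they have no weights. The size of the flattened feature map feeding the first fully connected layer depends on the input resolution, strides, and pooling, none of which are given here. It is therefore left as a parameter.

```python
# (number of filters, kernel size) for the three convolutional layers.
CONV_LAYERS = [(96, 7), (256, 5), (384, 3)]


def conv_params(in_channels: int, out_channels: int, kernel_size: int) -> int:
    """Weights (k * k * in_channels per filter) plus one bias per filter."""
    return (kernel_size * kernel_size * in_channels + 1) * out_channels


def dense_params(in_features: int, out_features: int) -> int:
    """Fully connected weights plus one bias per output node."""
    return (in_features + 1) * out_features


def count_parameters(flattened_size: int, num_classes: int, in_channels: int = 3) -> int:
    total = 0
    channels = in_channels
    for filters, kernel in CONV_LAYERS:
        total += conv_params(channels, filters, kernel)
        channels = filters  # each layer's filters become the next layer's channels
    total += dense_params(flattened_size, 512)  # first fully connected layer
    total += dense_params(512, 512)             # second fully connected layer
    total += dense_params(512, num_classes)     # softmax output layer
    return total
```

The convolutional layers alone account for 14,208 + 614,656 + 885,120 parameters. In practice, the first fully connected layer usually dominates the total once a realistic `flattened_size` is plugged in.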
